Reducing Entropy in Modern Web Architecture: Service Endpoints

Web applications keep growing in complexity. Today, most non-trivial web apps integrate a variety of attached services, including databases, third-party APIs, and containers for both front-end and back-end workloads. This modular approach lets developers ship software quickly while gaining enterprise-grade properties such as scalability and resilience.

However, as architectures become more microservice-oriented, managing configuration across services and environments turns into a real challenge, especially at scale and when multiple domains or environments have different requirements.

The Challenge of Configuration Management

In private networks, managing hostnames to reduce entropy between environments and domains is a feasible approach, but classical network and system administration can be expensive and complicated. Consider a web application made up of a database, an enterprise message bus, and several intercommunicating microservices. A common way to simplify configuration is to include a standard environment identifier as a hostname segment, so that service connections are configured as http://svc1.dev.local or http://svc1.test.local. While this makes endpoints easier to read, it does little to reduce entropy: every service still needs its endpoints configured explicitly, once per environment.
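To make the remaining entropy concrete, here is a minimal Python sketch assuming the svc1.dev.local style convention. The service inventory (svc2, database, message-bus) is hypothetical, and the function only builds hostnames; it is an illustration, not a real configuration tool.

```python
# Hypothetical inventory for the example stack (database, bus, microservices).
SERVICES = ["svc1", "svc2", "database", "message-bus"]


def endpoints_for(env: str) -> dict[str, str]:
    """Build the explicit hostname map for one environment.

    Assumes the <service>.<env>.local naming convention. The scheme and
    port would be added per protocol (http, postgres, amqp, ...).
    """
    return {svc: f"{svc}.{env}.local" for svc in SERVICES}


dev = endpoints_for("dev")
test = endpoints_for("test")

# Every entry differs between environments, so each one has to be
# configured (and kept in sync) separately for every environment.
changed = [svc for svc in SERVICES if dev[svc] != test[svc]]
print(f"{len(changed)} of {len(SERVICES)} endpoints differ between dev and test")
```

The naming scheme is consistent, but the number of values to manage still grows with services × environments.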

Kubernetes: Reducing Entropy

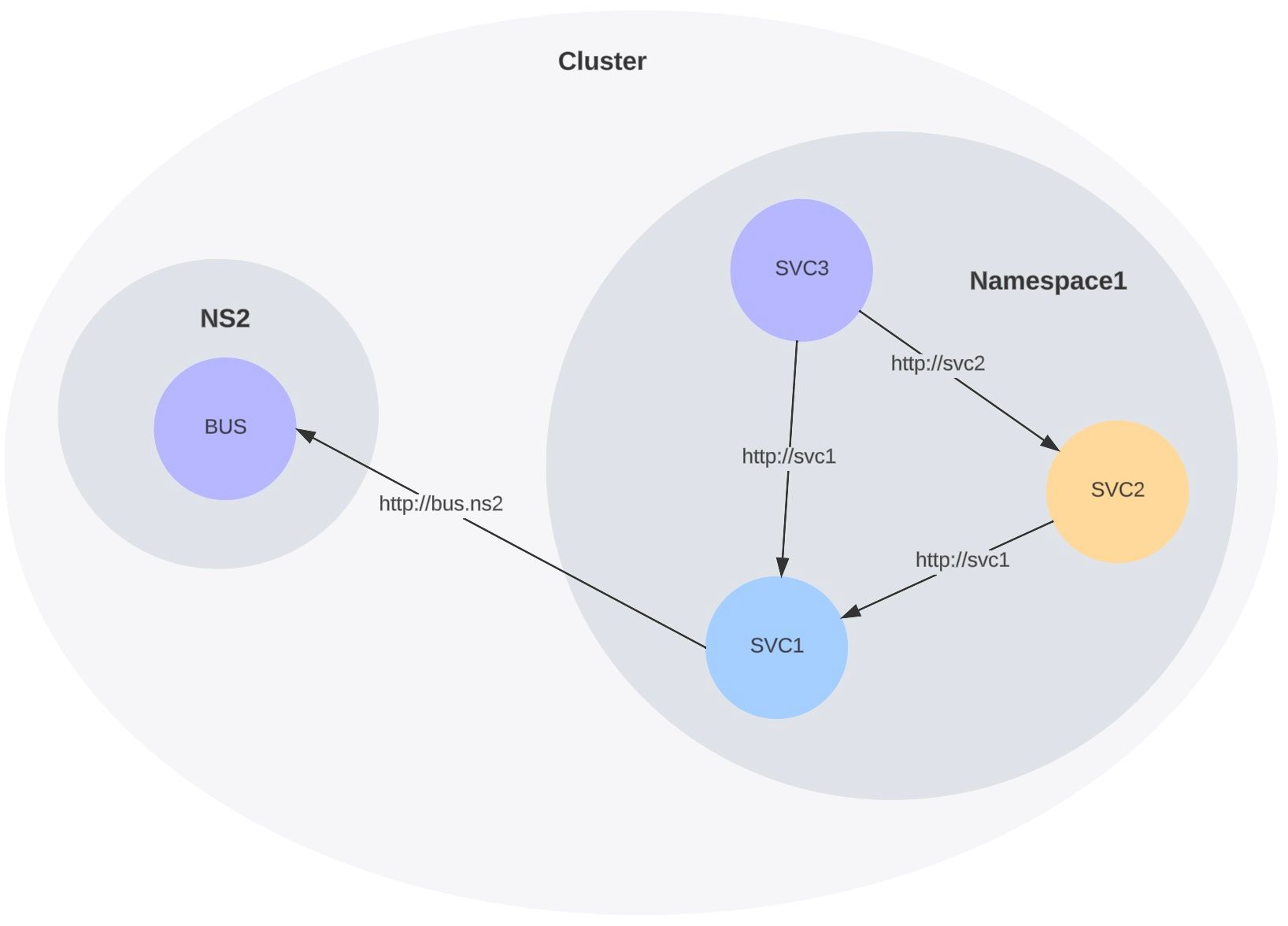

Kubernetes simplifies this further. It offers namespacing and cluster DNS out of the box: each Service gets a fully qualified DNS name such as svc1.othernamespace.svc.cluster.local (cluster.local being the default, configurable cluster domain), and each pod's resolver is configured with search domains for its own namespace and for the cluster. You can use distinct namespaces within one cluster or entirely separate clusters for different environments, and either way service names become "relative" to each other. A reference can be as simple as http://svc1 within the same namespace, or http://svc1.othernamespace for a service in a different one.
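The short names work because of the resolver's search list. The Python sketch below models, in simplified form, how a pod's resolver expands a name, assuming the default pod resolv.conf (search entries for the pod's namespace, svc.cluster.local and cluster.local, plus ndots:5). Real pods may also inherit extra search domains from the node, which this sketch ignores. The first candidate that actually exists in DNS is the one that gets used.

```python
def candidate_fqdns(name: str, namespace: str,
                    cluster_domain: str = "cluster.local",
                    ndots: int = 5) -> list[str]:
    """Return the names a pod's resolver would try, in order.

    Simplified model of the default Kubernetes pod resolv.conf:
      search <namespace>.svc.<cluster_domain> svc.<cluster_domain> <cluster_domain>
      options ndots:5
    """
    if name.endswith("."):
        # A trailing dot marks an absolute name: the search list is skipped.
        return [name.rstrip(".")]
    search = [f"{namespace}.svc.{cluster_domain}",
              f"svc.{cluster_domain}",
              cluster_domain]
    expanded = [f"{name}.{domain}" for domain in search]
    if name.count(".") >= ndots:
        # Enough dots: try the name as-is first, then the search list.
        return [name] + expanded
    # Fewer dots than ndots: search list first, then the name as-is.
    return expanded + [name]


for host in ["svc1", "svc1.othernamespace"]:
    print(host, "->", candidate_fqdns(host, namespace="dev"))
```

For a pod in the dev namespace, svc1 first expands to svc1.dev.svc.cluster.local (the same-namespace Service), while svc1.othernamespace misses on the first search entry and matches on the second, svc1.othernamespace.svc.cluster.local, which is that Service's canonical name.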

The beauty of this approach lies in portability: because the hostnames carry no environment identifier, the same configuration works unchanged across namespaces and clusters.

Entropy reduction FTW!

This might seem like a small win, but it is profoundly impactful in the context of managing large-scale infrastructures.
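As a minimal sketch of that portability, the Python snippet below generates one Kubernetes ConfigMap manifest per namespace from a single set of connection settings. The ConfigMap name app-endpoints, the keys, and the platform namespace holding a shared auth service are all hypothetical. Only metadata.namespace changes between environments; the data section is byte-for-byte identical. kubectl apply accepts JSON manifests, so the printed output could be applied directly.

```python
import json

# Hypothetical connection settings: short, namespace-relative hostnames only.
APP_CONFIG = {
    "SVC1_URL": "http://svc1",
    "SVC2_URL": "http://svc2",
    # A shared service living in another namespace (hypothetical name).
    "AUTH_URL": "http://auth.platform",
}


def config_map(namespace: str) -> dict:
    """Build a Kubernetes ConfigMap manifest for the given namespace."""
    return {
        "apiVersion": "v1",
        "kind": "ConfigMap",
        "metadata": {"name": "app-endpoints", "namespace": namespace},
        # The data section is identical in every environment.
        "data": dict(APP_CONFIG),
    }


manifests = {ns: config_map(ns) for ns in ["dev", "test", "prod"]}
print(json.dumps(manifests["test"], indent=2))
```

Compared with per-environment hostnames, the number of values to maintain no longer grows with the number of environments.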

The Blessing of Simplified Configuration

With Kubernetes, the daunting task of managing connection endpoints between services becomes far more manageable. This is particularly useful when deploying a new stack, such as an "integration testing" environment: the existing configuration can be reused as-is, because services find each other by the same short names in the new namespace or cluster. Fewer per-environment values mean fewer chances for a misconfigured endpoint, and a web architecture that is more robust and easier to maintain.
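Standing up such an environment can then be reduced to two kubectl commands. The Python sketch below only builds the argument lists; the manifests/ directory and the integration-testing namespace name are hypothetical, and it assumes the manifests leave metadata.namespace unset so that --namespace decides where they land (kubectl rejects a conflicting namespace).

```python
import shlex


def bring_up(namespace: str, manifest_dir: str = "manifests/") -> list[list[str]]:
    """Return kubectl commands that stand up the full stack in a new namespace.

    The manifests only use namespace-relative service names, so the same
    directory is applied unchanged; only the target namespace varies.
    """
    return [
        ["kubectl", "create", "namespace", namespace],
        ["kubectl", "apply", "-f", manifest_dir, "--namespace", namespace],
    ]


def tear_down(namespace: str) -> list[list[str]]:
    """Deleting the namespace removes the namespaced resources created in it."""
    return [["kubectl", "delete", "namespace", namespace]]


for cmd in bring_up("integration-testing") + tear_down("integration-testing"):
    print(shlex.join(cmd))
```

To actually run the commands, each list could be passed to subprocess.run(cmd, check=True); printing them first keeps the sketch side-effect free.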

Conclusion

The move to microservice-oriented web development has brought with it the challenge of managing complex configuration. Kubernetes namespaces and cluster DNS address a large part of that challenge, significantly reducing the entropy inherent in such architectures. By simplifying configuration and making it portable, Kubernetes lets developers and system administrators spend less time on the intricacies of infrastructure management and more on the applications themselves.

There are many other ways to leverage Kubernetes and supporting software to reduce entropy, and we'll cover some of them in future posts!